nivek

Rise of the Machines - Has technology evolved beyond our control?

Across the sciences and society, in politics and education, in warfare and commerce, new technologies are not merely augmenting our abilities, they are actively shaping and directing them, for better and for worse.

If we do not understand how complex technologies function then their potential is more easily captured by selfish elites and corporations. The results of this can be seen all around us.

There is a causal relationship between the complex opacity of the systems we encounter every day and global issues of inequality, violence, populism and fundamentalism.

Instead of a utopian future in which technological advancement casts a dazzling, emancipatory light on the world, we seem to be entering a new dark age characterized by ever more bizarre and unforeseen events.

The Enlightenment ideal of distributing more information ever more widely has not led us to greater understanding and growing peace, but instead seems to be fostering social divisions, distrust, conspiracy theories and post-factual politics.

To understand what is happening, it’s necessary to understand how our technologies have come to be, and how we have come to place so much faith in them.

The Machines are Learning to Keep their Secrets

Researchers at Google Brain set up three networks called Alice, Bob and Eve. Their task was to learn how to encrypt information. Alice and Bob both knew a number – a key, in cryptographic terms – that was unknown to Eve. Alice would perform some operation on a string of text, and then send it to Bob and Eve.

If Bob could decode the message, Alice’s score increased; but if Eve could, Alice’s score decreased. Over thousands of iterations, Alice and Bob learned to communicate without Eve breaking their code: they developed a private form of encryption like that used in private emails today. But crucially, we don’t understand how this encryption works. Its operation is occluded by the deep layers of the network. What is hidden from Eve is also hidden from us.
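The scoring rule described above can be sketched in a few lines. This is only a toy illustration, not the researchers' actual training objective: the function name `alice_score` and the per-character accuracy measure are assumptions made here for clarity.

```python
def alice_score(plaintext: str, bob_guess: str, eve_guess: str) -> float:
    """Toy reward for Alice (hypothetical, not the researchers' real objective).

    The score rises with the fraction of characters Bob recovers
    and falls with the fraction Eve recovers.
    """
    def accuracy(guess: str) -> float:
        if not plaintext:
            return 0.0
        # Count positions where the guess matches the original message.
        matches = sum(a == b for a, b in zip(plaintext, guess))
        return matches / len(plaintext)

    return accuracy(bob_guess) - accuracy(eve_guess)
```

Under this rule, Alice does best when Bob reads the message perfectly and Eve recovers nothing, which is the pressure that pushes the pair towards a private code.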

Google Translate was known for its humorous errors, but in 2016, the system started using a neural network developed by Google Brain, and its abilities improved dramatically. Rather than simply cross-referencing heaps of texts, the network builds its own model of the world, and the result is not a set of two-dimensional connections between words, but a map of the entire territory. In this new architecture, words are encoded by their distance from one another in a mesh of meaning – a mesh only a computer could comprehend.

While a human can draw a line between the words “tank” and “water” easily enough, it quickly becomes impossible to draw on a single map the lines between “tank” and “revolution”, between “water” and “liquidity”, and all of the emotions and inferences that cascade from those connections. The map is thus multidimensional, extending in more directions than the human mind can hold. As one Google engineer commented, when pursued by a journalist for an image of such a system: “I do not generally like trying to visualise thousand-dimensional vectors in three-dimensional space.” This is the unseeable space in which machine learning makes its meaning. Beyond that which we are incapable of visualizing is that which we are incapable of even understanding.
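To make the idea of words placed by "distance" a little more concrete, here is a minimal sketch using cosine similarity, one common way of comparing word vectors. The four-dimensional vectors below are invented purely for illustration; real systems learn vectors with hundreds or thousands of dimensions, and nothing here is taken from Google's model.

```python
import math

def cosine_similarity(u, v):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

# Invented toy vectors (assumption: not real learned embeddings).
vectors = {
    "tank": [0.9, 0.1, 0.7, 0.0],
    "water": [0.8, 0.0, 0.1, 0.3],
    "revolution": [0.1, 0.9, 0.6, 0.0],
}

# Compare "tank" with every word; values closer to 1 mean "nearer" in the mesh.
for word, vec in vectors.items():
    print(word, round(cosine_similarity(vectors["tank"], vec), 3))
```

With only four numbers per word the relationships can still be read by eye; with a thousand, the same calculation works just as well, but the picture can no longer be drawn.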

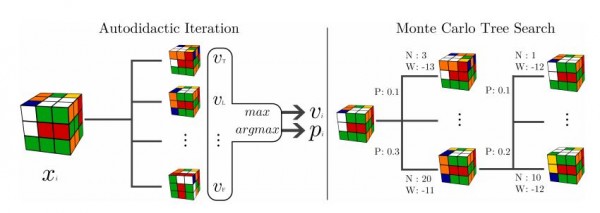

AlphaGo

By the time DeepMind's AlphaGo software took on the Korean professional Go player Lee Sedol in 2016, something had changed. In the second of five games, AlphaGo played a move that stunned Lee, placing one of its stones on the far side of the board. “That’s a very strange move,” said one commentator. “I thought it was a mistake,” said another. Fan Hui, a seasoned Go player who had been the first professional to lose to the machine six months earlier, said: “It’s not a human move. I’ve never seen a human play this move.”

AlphaGo went on to win the game, and the series. AlphaGo’s engineers developed its software by feeding a neural network millions of moves by expert Go players, and then getting it to play itself millions of times more, developing strategies that outstripped those of human players. But its own representation of those strategies is illegible: we can see the moves it made, but not how it decided to make them.

The question then becomes, what would a rogue algorithm or a flash crash look like in the wider reality?

Would it look, for example, like Mirai, a piece of software that brought down large portions of the internet for several hours on 21 October 2016?

When researchers dug into Mirai, they discovered it targets poorly secured internet-connected devices – from security cameras to digital video recorders – and turns them into an army of bots. In just a few weeks, Mirai infected half a million devices, and it needed just 10% of that capacity to cripple major networks for hours.

Mirai, in fact, looks like nothing so much as Stuxnet, another virus discovered within the industrial control systems of hydroelectric plants and factory assembly lines in 2010. Stuxnet was a military-grade cyberweapon; when dissected, it was found to be aimed specifically at the Siemens control systems that run centrifuges, and designed to go off when it encountered a facility that possessed a particular number of such machines. That number corresponded with one particular facility: the Natanz nuclear facility in Iran. When activated, the program would quietly degrade crucial components of the centrifuges, causing them to break down and disrupting the Iranian enrichment programme. The attack was apparently partially successful, but the effect on other infected facilities is unknown.

To this day, despite obvious suspicions, nobody knows where Stuxnet came from, or who made it.

Mirai's original authors were eventually identified and pleaded guilty in a US court in 2017, but its source code has been public since 2016, so nobody knows where its next iteration might come from. It might be there, right now, breeding in the CCTV camera in your office, or the wifi-enabled kettle in the corner of your kitchen.

How we understand and think of our place in the world, and our relation to one another and to machines, will ultimately decide where our technologies will take us.
